Sanitary selves

Previously known as Graphics powder (2017).

Abstract

Sanitary selves is an olfactory VR installation celebrating and critiquing motion capture databases from the 2000s. Audience members are invited to engage with the project through immersive smell and sound as well as interact with the database in a virtual space. Sanitary selves seeks to question the sanitization and omission of markers of identity in the databases, asking whether purely abstract movements can exist when we are so steeped in culture.

The project first premiered at the Logan 5 Year Bash.

Team bio

The Sanitary selves team consists of lead artist Jas Brooks, essayist Cynthia Zhang, sound designer Isaac Nealey, and hardware assistant Snow Xu.

Jas Brooks is an artist and PhD candidate in the Department of Computer Science at the University of Chicago (focusing on smell & taste for VR), as well as a lecturer at the School of The Art Institute of Chicago.

Cynthia Zhang is a PhD candidate in the Department of Comparative Studies in Literature and Culture at the University of Southern California. She is a graduate of the University of Chicago, where she received her BA in Comparative Literature and her MA in Creative Writing.

Isaac Nealey is currently an undergraduate at the University of California, San Diego studying Interdisciplinary Computing and the Arts. His work and interests are wide ranging, often including computing, signal processing, digital graphics, and audio hardware fabrication. Website.

Snow Xu is an artist and developer who uses VR, AR, and machine installations to explore the anatomical and affective differences between humans and machines as they confront each other. Xu explores how computers and machines are used to mediate human biases and transgression that stem from commercially marketed modes of human-machine intimacy. Her artworks have been exhibited at venues including Ars Electronica and the Museum of Contemporary Art Chicago. Website.

Complete description

Man, woman, if you do not want to be gay, if you do not want to play gay, that queer trifling, that feminine act of walking like a Gaf. You can become a man again, you can use your intelligence and see how it works

While doing dramaturgy on movement for Sanitary selves, I came across one of Kitover’s many bizarre and disturbing ramblings. Although I disagree with the statement, it made me confront a very vivid experience of my own, notably the encoding of gender and sexuality in my walking. Culturally, we quickly ascribe and decode information from a person’s movement. Through stereotypes and environmental pressures, there are things such as the “feminine act of walking like a [fag],” which have – at times – been actively fought against, lofted as universal, or used to target people for bashing. Today, motion tracking technology paired with machine learning allows the rapid classification of a bystander’s motion to infer traits (gender, age, etc.). Intriguingly, this very present sense of Self in motion is not acknowledged in major motion capture databases from the 2000s, particularly in Carnegie Mellon University’s Graphics Lab Motion Capture Database (CMU) and University of Tokyo’s Intelligent Cooperative Systems Laboratory Action Database (ICS). Both databases exclude any clear information about their actors. We can only glean marginal amounts of information on the actors based on a sample photo (CMU) or the 3D sample model used (ICS). As consumers, animators thus engender and apply new ethnic and cultural backgrounds to the animation by retargeting it to their model and environment. What does it mean for these consumers to take these neutered motions and contextualize them once again? What are the implications of these databases being simultaneously incredibly accessible and lacking full information on actors?

I believe that these databases are incredible resources for the arts and sciences. However, I am hesitant to accept these databases at face value; I am adamant in my belief that the labs’ methodologies directly impact the aesthetics of derivative work, often unintentionally. CMU and ICS’s sanitization of markers of identity unknowingly posits the possibility of purely abstract movements: that their actors’ movements represent the generic version of that movement. Accordingly, through Graphics powder, I sought to celebrate these databases artistically and bring attention to this emerging hypothesis. Can purely abstract movements exist when we are so steeped in culture?

I feel myself looked at by things, my activity is equally passivity… not to see in the outside, as others see it, the contours of a body one inhabits, but especially to be seen by the outside, to exist within it, to emigrate into it, to be seduced, captivated, alienated by the phantom, so that the seer and the visible reciprocate one another and we no longer know which sees and which is seen. It is this Visibility, this generality of the Sensible in itself, this anonymity innate to Myself that we have previously called flesh.

Merleau-Ponty

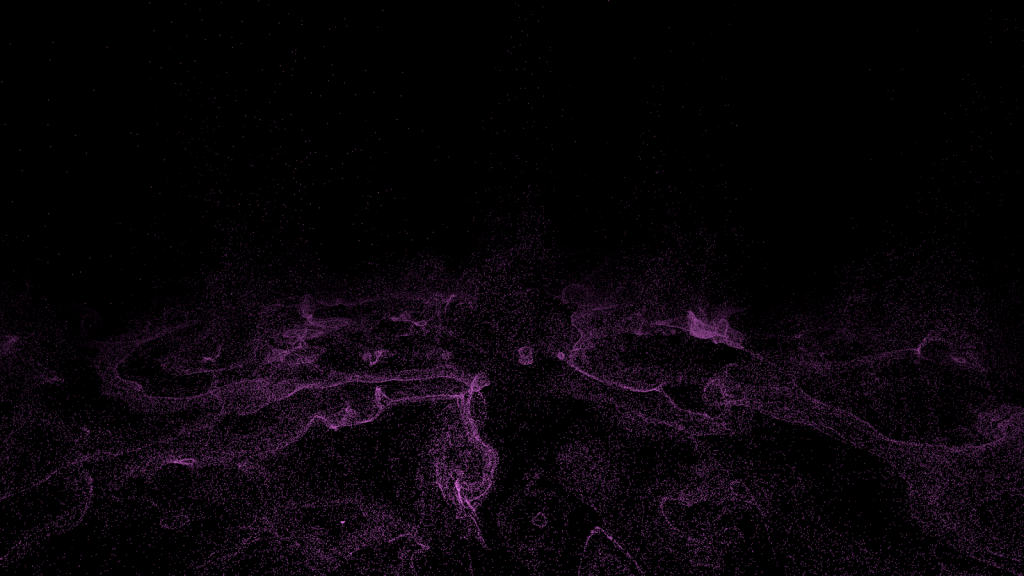

Sanitary selves invites audience members to directly engage with the project through immersive smell and sound as well as interact with the database in a virtual space. Within the virtual environment, the headset-wearer is immersed in a powder/snow storm that continuously falls and bounces off the ground. Scattered in the environment are moving bodies (retargeting of motions from either database) that can only be seen through the negative space formed by the body’s physical interaction with the powder. (To ease their visibility, the project added one particle at each of the body’s joints, in a color distinct from the powder. However, the bodies’ visibility remains hinged on the stereoscopic and parallax qualities of 6-DOF headsets. This makes it hard to take a screenshot or video that does justice to the negative space formed.) When the headset-wearer gets too close to a body, it disperses and spawns a new body in another location. The headset-wearer is thus forced to observe the negative spaces from a distance and carefully approach the bodies for further inspection: their existence being unstable in the environment.
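The disperse-and-respawn behavior described above can be sketched roughly as follows. This is only an illustrative sketch, not the installation's code: the threshold `DISPERSE_RADIUS`, the arena size, the 2D ground-plane positions, and the function names are all assumptions made for the example.

```python
import math
import random

DISPERSE_RADIUS = 1.5   # metres; assumed threshold, not taken from the installation
ARENA_HALF_SIZE = 5.0   # metres; assumed half-width of the square virtual floor


def respawn_position(viewer, rng, min_distance=DISPERSE_RADIUS * 2):
    """Pick a random ground position at least min_distance from the viewer."""
    while True:
        x = rng.uniform(-ARENA_HALF_SIZE, ARENA_HALF_SIZE)
        z = rng.uniform(-ARENA_HALF_SIZE, ARENA_HALF_SIZE)
        if math.dist((x, z), viewer) >= min_distance:
            return (x, z)


def update_bodies(bodies, viewer, rng):
    """Return updated body positions for one frame.

    A body closer to the viewer than DISPERSE_RADIUS is dispersed and
    replaced by a new body elsewhere; all other bodies stay where they are.
    """
    return [
        respawn_position(viewer, rng)
        if math.dist(body, viewer) < DISPERSE_RADIUS
        else body
        for body in bodies
    ]
```

Respawning at twice the dispersal radius (again, an assumed value) keeps a new body from appearing right next to the headset-wearer, which matches the idea that bodies must be approached carefully from a distance.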

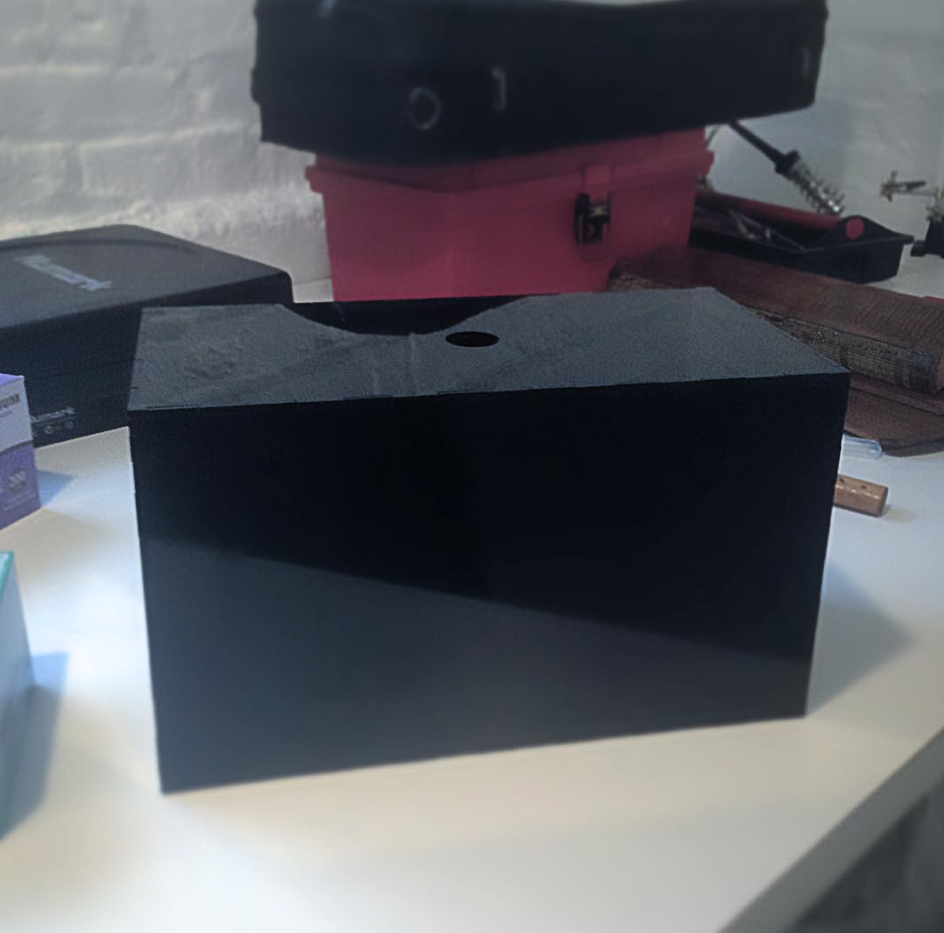

The powder itself is a visualization and scenting of the labs’ sanitization of identity markers. As the headset-wearer explores the virtual environment and observes these neutered bodies that are only visible from the sanitization particles, they inadvertently sanitize the audience. For each particle that collides with the headset-wearer’s head, a standing olfactory display releases a minuscule amount of essential oil. Three essential oils were selected primarily for their association with cleaning products and antiseptics, and – less so – for their pop-spiritual associations: lemon and eucalyptus with purification, and rosewood with sexuality/gender. (Note: I refer to these as “pop-spirituality” as most do not provide references or explanations to the grounding of their associations, or have vague tie-backs to Hinduism and Wiccan traditions through imagery.) By atomizing these essential oils according to the powder colliding with the headset-wearer, the audience experiences an olfactory sanitization, obfuscating their markers of identity in the realm of scent. From talking with various audience members, many took note of the “clean” smell in the room and often walked away with those odors still overpowering their own. A fascination for me was their olfactive awareness: they noticed the room being scented, but did not realize that they too were re-scented.
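One way to picture the coupling between powder collisions and scent release is the sketch below. It is a hypothetical illustration only: the head radius, the per-collision atomizer on-time, the safety cap, and the function names are assumptions, and the actual olfactory display hardware and its control interface are not described here.

```python
import math

HEAD_RADIUS = 0.12            # metres; assumed size of the head collision sphere
DOSE_PER_COLLISION_MS = 2.0   # assumed atomizer on-time per colliding particle
MAX_BURST_MS = 200.0          # assumed cap so one frame cannot flood the room


def count_head_collisions(particles, head):
    """Count particles whose position lies inside the head sphere."""
    return sum(1 for p in particles if math.dist(p, head) <= HEAD_RADIUS)


def burst_duration_ms(collisions):
    """Map a collision count to an atomizer on-time, capped at MAX_BURST_MS."""
    return min(collisions * DOSE_PER_COLLISION_MS, MAX_BURST_MS)
```

In a frame loop, the result of `burst_duration_ms(count_head_collisions(particles, head))` would be sent to whatever drives the atomizers; how the three oils are mixed or alternated is left open here because the text does not specify it.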

Throughout the project, 3D spatialized sound plays on loudspeakers for everyone to hear. Each body present in the virtual environment emanates the sound of tuned wind rising and falling. Paired with the odors, two spotlights, and tall plinths (5’), audience members often stayed to just observe and watch others interact in the virtual environment: almost as if they were transfixed or fascinated by the experience. Several copies of Cynthia Zhang’s “There, Yet Not: Motion Capture Technologies and the Promise of (Dis)Embodiment” were available for audience members to read at the entrance.

Acknowledgements

This artwork was graciously commissioned by the University of Chicago for the Logan 5 Year Bash, where it first premiered as Graphics powder.

CMU Graphics Lab Motion Capture Database: The data used in this project was obtained from mocap.cs.cmu.edu. The database was created with funding from NSF EIA-0196217.

ICS Action Database: The data used in this project was obtained from miubiq.cs.titech.ac.jp/action and used with permission. Copyright © ICS, The University of Tokyo, 2003-2009.

Bibliography

A backup of all pages can be accessed at the Graphics powder Webrecorder Collection. Unfortunately, Kitover’s link has gone inactive since the original exhibition and was not archived in time.